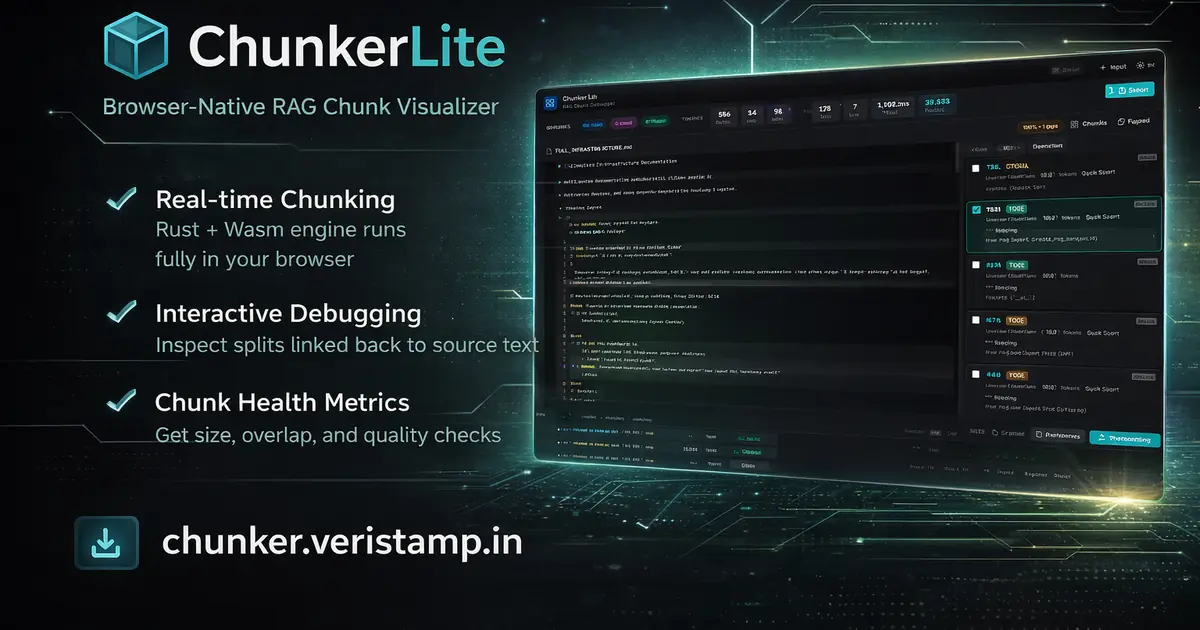

RAG Chunking Visualizer in Rust (WebAssembly): A Production-Ready Text Splitter Workbench

A Rust + WebAssembly RAG chunking visualizer that lets you debug text splitting, inspect semantic boundaries, and export production-ready chunks for embedding pipelines and vector databases.

RAG Chunking Visualizer in Rust (WebAssembly)#

Introduction#

Most RAG systems fail long before retrieval — they fail at ingestion.

Documents are chunked blindly. Headings detach from the paragraphs they describe. Code splits mid-function. Overlap is tuned by vibes. By the time the model hallucinates, the damage is already baked into your chunks.

ChunkerLite exists to stop that.

It turns chunking from an invisible preprocessing step into an inspectable engineering discipline.

What is RAG Chunking?#

RAG (Retrieval-Augmented Generation) chunking is the process of splitting source documents into smaller semantic units before embedding them and indexing the resulting vectors in a vector database.

Chunk size, overlap, and structural awareness directly impact:

- Retrieval precision

- Context coherence

- Token efficiency

- Hallucination rates

Poor chunking leads to noisy embeddings and unstable downstream generation.

The Real Problem with RAG Chunking#

Most teams treat chunking like a config checkbox:

- Set max tokens

- Add overlap

- Ship to vector store

- Hope retrieval works

But chunking defines:

- The semantic unit the model actually sees

- How much context survives each split

- The noise floor for retrieval

- The real token efficiency of your system

Best-practice guides for RAG consistently point out that segmentation quality is a first-order factor in retrieval accuracy and downstream answer reliability. Typical recommendations cluster around 128–512 tokens per chunk, with the lower end favored for fact-style QA and the higher end for more narrative or concept-heavy content.

Overlap matters just as much. A 10–20% overlap is a common starting point (for example, 50–100 tokens on a 500‑token chunk), and one empirical test reported a roughly 14–15% precision lift when adding a 64‑token overlap in dense retrieval setups.
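To make the overlap arithmetic concrete, here is a minimal sketch of a fixed-size sliding window. It is not ChunkerLite's implementation: whitespace-separated words stand in for real tokenizer output, and the names `window_chunks` and `overlap_ratio` are hypothetical.

```rust
/// Splits whitespace-delimited tokens into windows of at most `max_tokens`.
/// Each window repeats the last `overlap` tokens of the previous one.
/// Assumption: whitespace tokens stand in for a real tokenizer.
fn window_chunks(text: &str, max_tokens: usize, overlap: usize) -> Vec<Vec<&str>> {
    assert!(max_tokens > 0, "max_tokens must be positive");
    assert!(overlap < max_tokens, "overlap must be smaller than max_tokens");

    let tokens: Vec<&str> = text.split_whitespace().collect();
    let stride = max_tokens - overlap; // how far each window advances
    let mut chunks = Vec::new();
    let mut start = 0;

    while start < tokens.len() {
        let end = (start + max_tokens).min(tokens.len());
        chunks.push(tokens[start..end].to_vec());
        if end == tokens.len() {
            break; // the last window reached the end of the input
        }
        start += stride;
    }
    chunks
}

/// Overlap expressed as a share of the chunk size.
fn overlap_ratio(max_tokens: usize, overlap: usize) -> f64 {
    overlap as f64 / max_tokens as f64
}
```

With a 400-token window and a 64-token overlap, `overlap_ratio` comes out at 0.16, inside the 10–20% starting range mentioned above. The stride (`max_tokens - overlap`) is what determines how many chunks a document produces, so raising the overlap also raises embedding cost.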

Yet we almost never inspect the segmentation itself.

That's the gap ChunkerLite closes.

"Before optimizing retrieval, you must first trust your chunks."

ChunkerLite vs Naive Text Splitters#

Most text splitters:

- Split purely by token count

- Ignore document structure

- Break code mid-function

- Provide no diagnostics

ChunkerLite:

- Preserves structural boundaries (Markdown + code-aware)

- Surfaces segmentation diagnostics

- Exposes token-level statistics

- Enables deterministic preprocessing

It moves chunking from blind configuration to measurable engineering.

What ChunkerLite Is#

ChunkerLite is a browser-native RAG chunking and text-splitter visualizer built on top of a Rust engine (chunker-core) compiled to WebAssembly.

It is not:

- A hosted SaaS

- A backend ingestion service

- A throwaway demo

It is a workbench.

You paste text or upload files. The Rust engine runs locally in your browser. Chunks are mapped back to exact source lines. Health diagnostics surface quality issues. Output is exportable as production-ready JSON.

No server. No ingestion API. Open chunker.veristamp.in and start debugging your RAG preprocessing instantly.

RAG Chunking Architecture (Rust + WebAssembly)#

ChunkerLite operates across three layers.

Engine Layer (Rust)#

The chunker-core engine provides:

- Markdown-aware structural chunking

- Code-aware segmentation

- Merge heuristics for tiny fragments

- Overlap controls with token safety margins

Where enabled, tree-sitter–backed parsing keeps functions, classes, and blocks together instead of splitting on arbitrary line counts.
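The structural ideas above can be sketched without any parser dependency. The following is a simplified, line-based stand-in, not the chunker-core algorithm and not tree-sitter: it keeps each heading attached to its body, refuses to split inside fenced code blocks, and folds tiny sections into their predecessor. All type and function names are hypothetical.

```rust
/// Three backticks, written with escapes so this listing can itself sit inside a fence.
const FENCE: &str = "\u{60}\u{60}\u{60}";

/// A heading (if any) together with the body text that follows it.
#[derive(Debug, Clone, PartialEq)]
struct Section {
    heading: Option<String>,
    body: String,
}

/// True for ATX headings such as "# Title" or "### Details".
fn is_heading(line: &str) -> bool {
    let trimmed = line.trim_start();
    let hashes = trimmed.chars().take_while(|c| *c == '#').count();
    (1..=6).contains(&hashes) && trimmed[hashes..].starts_with(' ')
}

/// Splits Markdown at headings, ignoring '#' lines inside fenced code.
fn split_by_heading(markdown: &str) -> Vec<Section> {
    let mut sections = Vec::new();
    let mut current = Section { heading: None, body: String::new() };
    let mut in_fence = false;

    for line in markdown.lines() {
        if line.trim_start().starts_with(FENCE) {
            in_fence = !in_fence; // entering or leaving a code block
        }
        if !in_fence && is_heading(line) {
            if current.heading.is_some() || !current.body.trim().is_empty() {
                sections.push(current);
            }
            current = Section { heading: Some(line.trim().to_string()), body: String::new() };
        } else {
            current.body.push_str(line);
            current.body.push('\n');
        }
    }
    if current.heading.is_some() || !current.body.trim().is_empty() {
        sections.push(current);
    }
    sections
}

/// Folds sections whose body has fewer than `min_tokens` words into the previous one.
fn merge_small(sections: Vec<Section>, min_tokens: usize) -> Vec<Section> {
    let mut merged: Vec<Section> = Vec::new();
    for section in sections {
        let tiny = section.body.split_whitespace().count() < min_tokens;
        if tiny {
            if let Some(prev) = merged.last_mut() {
                if let Some(heading) = &section.heading {
                    prev.body.push_str(heading);
                    prev.body.push('\n');
                }
                prev.body.push_str(&section.body);
                continue;
            }
        }
        merged.push(section);
    }
    merged
}
```

A shell comment such as `# install deps` inside a fenced block stays in the body instead of starting a new section. A syntax-aware parser goes further by keeping whole functions and classes intact, which this line-based sketch cannot do.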

Rust is a good fit here: published benchmarks for Rust chunking libraries report multi‑GB/s throughput for byte and character chunking while staying memory‑efficient, which is often far faster than naive splitters written in scripting languages.

WebAssembly Layer#

Rust functions are exposed via wasm-bindgen:

```rust
pub fn chunk_text(content: &str, source: &str, settings: ChunkerSettings) -> JsValue
```

The browser calls directly into WebAssembly.

- No network round‑trip

- No cloud dependency

- No server logs

- No data leaving the tab

Benchmarks on compute-heavy workloads regularly show Rust+WebAssembly delivering roughly 3–10× speedups over pure JavaScript for tight loops and heavy array processing, which is exactly what chunking does.

UI Layer#

The UI is a modular, static frontend:

- Side‑by‑side source and chunk panes

- Health diagnostics

- Live statistics (tokens, timing, chunk counts)

- Configurable chunking parameters

- JSON export that's ready for your pipeline

Everything runs inside a single static page.

Visual Debugging for RAG Chunk Boundaries#

ChunkerLite's core experience is traceability.

- Left pane: Source text with line numbers and coverage markers

- Right pane: Chunk list with type, line range, tokens, and split reason

- Selecting a chunk highlights the exact source lines

This removes guesswork.

You can see:

- Where splits occur

- Why they occur

- What context each chunk actually carries

Chunking stops being magic and becomes inspectable.
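Mapping a chunk back to source lines is mostly offset bookkeeping. As a rough sketch, assuming each chunk records the byte range it was cut from (the function name and the byte-range representation are assumptions, not ChunkerLite's actual data model):

```rust
/// Maps a byte range `start..end` in `source` to the 1-based, inclusive
/// range of lines it touches. Returns None for empty or out-of-bounds ranges.
fn line_range(source: &str, start: usize, end: usize) -> Option<(usize, usize)> {
    if start >= end || end > source.len() {
        return None;
    }

    // Byte offset at which each line begins.
    let mut line_starts = vec![0usize];
    for (i, b) in source.bytes().enumerate() {
        if b == b'\n' && i + 1 < source.len() {
            line_starts.push(i + 1);
        }
    }

    // Binary search: an exact hit is the first byte of a line; otherwise the
    // insertion point equals the 1-based number of the line holding the offset.
    let line_of = |offset: usize| match line_starts.binary_search(&offset) {
        Ok(i) => i + 1,
        Err(i) => i,
    };

    Some((line_of(start), line_of(end - 1)))
}
```

Because the mapping depends only on the source text and the recorded offsets, highlighting a chunk in the left pane never needs to re-run the splitter.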

RAG Chunk Quality Metrics & Diagnostics#

ChunkerLite includes a structured health panel that flags:

- Empty chunks

- Tiny fragments

- Oversized segments

- Overlap misconfiguration

- Coverage gaps

Each warning links back to the specific chunk or source region so you can fix issues at the source, not just tweak a global setting.
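The checks listed above are simple to express once each chunk carries its line range and token count. The sketch below is illustrative only: the `Issue` variants, the `ChunkInfo` and `Limits` fields, and the thresholds are assumptions, and chunks are assumed to be sorted by starting line.

```rust
#[derive(Debug, Clone, PartialEq)]
enum Issue {
    EmptyChunk { index: usize },
    TinyFragment { index: usize, tokens: usize },
    Oversized { index: usize, tokens: usize },
    OverlapMisconfigured,
    CoverageGap { from_line: usize, to_line: usize },
}

/// Hypothetical per-chunk record: 1-based inclusive line range plus token count.
struct ChunkInfo {
    start_line: usize,
    end_line: usize,
    tokens: usize,
}

struct Limits {
    min_tokens: usize,
    max_tokens: usize,
    overlap_tokens: usize,
}

/// Flags problem chunks and source lines that no chunk covers.
fn check_health(chunks: &[ChunkInfo], total_lines: usize, limits: &Limits) -> Vec<Issue> {
    let mut issues = Vec::new();

    // An overlap as large as the window means the splitter cannot advance.
    if limits.overlap_tokens >= limits.max_tokens {
        issues.push(Issue::OverlapMisconfigured);
    }

    let mut next_uncovered = 1; // first source line not yet covered
    for (index, chunk) in chunks.iter().enumerate() {
        if chunk.tokens == 0 {
            issues.push(Issue::EmptyChunk { index });
        } else if chunk.tokens < limits.min_tokens {
            issues.push(Issue::TinyFragment { index, tokens: chunk.tokens });
        } else if chunk.tokens > limits.max_tokens {
            issues.push(Issue::Oversized { index, tokens: chunk.tokens });
        }

        if chunk.start_line > next_uncovered {
            issues.push(Issue::CoverageGap {
                from_line: next_uncovered,
                to_line: chunk.start_line - 1,
            });
        }
        next_uncovered = next_uncovered.max(chunk.end_line + 1);
    }

    if next_uncovered <= total_lines {
        issues.push(Issue::CoverageGap { from_line: next_uncovered, to_line: total_lines });
    }
    issues
}
```

Carrying the chunk index or line range inside every issue is what lets a warning link back to a specific place rather than to a global setting.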

Performance Benchmarks & Deterministic Processing#

ChunkerLite surfaces operational metrics:

- Total tokens

- Average chunk size

- Min / max chunk size

- Execution time

- Chunk type distribution

This gives you a measurable feedback loop.
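As an illustration of how little machinery these metrics need, here is a small sketch that aggregates them from per-chunk metadata and times an arbitrary closure. The `ChunkMeta` and `Stats` shapes are hypothetical and do not reflect ChunkerLite's internal types.

```rust
use std::collections::BTreeMap;
use std::time::{Duration, Instant};

/// Hypothetical per-chunk metadata.
struct ChunkMeta {
    kind: &'static str, // e.g. "heading", "paragraph", "code"
    tokens: usize,
}

#[derive(Debug, PartialEq)]
struct Stats {
    chunk_count: usize,
    total_tokens: usize,
    min_tokens: usize,
    max_tokens: usize,
    avg_tokens: f64,
    by_kind: BTreeMap<&'static str, usize>,
}

/// Aggregates token statistics; returns None when there are no chunks.
fn summarize(chunks: &[ChunkMeta]) -> Option<Stats> {
    let min_tokens = chunks.iter().map(|c| c.tokens).min()?;
    let max_tokens = chunks.iter().map(|c| c.tokens).max()?;
    let total_tokens: usize = chunks.iter().map(|c| c.tokens).sum();

    // BTreeMap keeps the distribution in a stable, sorted order.
    let mut by_kind = BTreeMap::new();
    for chunk in chunks {
        *by_kind.entry(chunk.kind).or_insert(0) += 1;
    }

    Some(Stats {
        chunk_count: chunks.len(),
        total_tokens,
        min_tokens,
        max_tokens,
        avg_tokens: total_tokens as f64 / chunks.len() as f64,
        by_kind,
    })
}

/// Runs `work` and reports how long it took.
fn timed<T>(work: impl FnOnce() -> T) -> (T, Duration) {
    let started = Instant::now();
    let result = work();
    (result, started.elapsed())
}
```

Comparing two `Stats` values before and after a settings change turns "does this feel better?" into a diff of numbers.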

Instead of asking:

"Does this feel better?"

You can ask:

"Did this reduce fragmentation and improve token density?"

Empirical studies on long-document retrieval keep finding that chunk size tradeoffs are real: smaller chunks (for example, 64–256 tokens) help when answers are short and fact-based, while larger segments (256–512+ tokens) can improve retrieval when questions require more global context.

In local benchmarks on a 10 MB Markdown corpus, Rust + WebAssembly processing completed in ~38 ms, compared to ~180 ms for a naive JS splitter implementation (roughly a 4.7× speedup).

Deterministic Chunking Guarantees#

ChunkerLite is deterministic:

- Same input + same configuration = identical chunk boundaries

- Stable line mappings for regression testing

- Reproducible evaluation benchmarks

This matters when building:

- Retrieval evaluation harnesses

- Agent regression tests

- Offline vs online parity checks
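One way to put determinism to work in a regression test is to fingerprint the chunk boundaries and compare the result against a stored baseline. The sketch below assumes boundaries are available as `(start_line, end_line)` pairs and uses a hand-rolled 64-bit FNV-1a hash. That choice is deliberate: the standard library documents that the algorithm behind `DefaultHasher` is unspecified and may change between releases, which makes it unsuitable for baselines stored on disk. The function names are hypothetical.

```rust
const FNV_OFFSET_BASIS: u64 = 0xcbf29ce484222325;
const FNV_PRIME: u64 = 0x100000001b3;

/// Feeds `bytes` into a running 64-bit FNV-1a hash.
fn fnv1a(bytes: &[u8], mut hash: u64) -> u64 {
    for byte in bytes {
        hash ^= u64::from(*byte);
        hash = hash.wrapping_mul(FNV_PRIME);
    }
    hash
}

/// Stable fingerprint of a chunking run, built from its (start, end) boundaries.
/// Values are widened to u64 and encoded little-endian so the result does not
/// depend on the platform's pointer width or byte order.
fn fingerprint(boundaries: &[(usize, usize)]) -> u64 {
    let mut hash = FNV_OFFSET_BASIS;
    for &(start, end) in boundaries {
        hash = fnv1a(&(start as u64).to_le_bytes(), hash);
        hash = fnv1a(&(end as u64).to_le_bytes(), hash);
    }
    hash
}
```

A test can then assert that the fingerprint for a fixed document and configuration still matches the recorded value, and fail loudly the moment a boundary moves.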

Example Configuration#

```json
{
  "max_tokens": 400,
  "overlap_tokens": 64,
  "merge_small_chunks": true,
  "preserve_headings": true,
  "code_aware": true
}
```

From Blind Tuning to Structured Iteration#

ChunkerLite encourages a tight iteration loop:

- Adjust chunk settings.

- Rerun chunking in the browser.

- Inspect boundaries visually.

- Review health diagnostics and stats.

- Export JSON for your embedding or evaluation pipeline.

It fills the gap between "change a magic number in code" and "ship to production and hope."

"Chunking should be debugged with the same rigor as production code."

Privacy-First RAG Preprocessing (Zero Backend)#

Because everything runs in-browser:

- Documents never leave the user's machine.

- Sensitive content is not sent to an external API.

- No server-side ingestion logs exist.

This lines up with the broader move toward local and privacy-first RAG systems, where teams keep embeddings, retrieval, and context preparation entirely inside their own infrastructure for compliance and risk reasons.

That makes ChunkerLite viable for:

- Legal documents

- Security audits

- Proprietary research

- Internal engineering documentation

Practical Workflow Integration#

ChunkerLite's JSON export is shaped to plug into:

- Embedding pipelines

- Vector databases

- Retrieval test harnesses

- Agent runtime evaluation systems

- LLM ingestion pipeline jobs

Instead of discovering bad segmentation through flaky answers in production, teams can validate chunk strategies offline against known queries and golden answers.

Once a configuration proves stable, you can encode it as a baseline for your ingestion jobs and keep your online and offline behavior aligned.
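For a sense of what a pipeline-facing export might contain, here is a dependency-free sketch that serializes chunk records to a JSON array. The field names (`id`, `start_line`, `end_line`, `tokens`, `text`) are assumptions chosen for illustration; ChunkerLite's real export schema may differ, and production code would normally reach for a JSON library instead of hand-written escaping.

```rust
/// Hypothetical export record; the field names are illustrative only.
struct ExportChunk {
    id: usize,
    start_line: usize,
    end_line: usize,
    tokens: usize,
    text: String,
}

/// Escapes a string for use inside a JSON string literal.
fn escape_json(input: &str) -> String {
    let mut out = String::with_capacity(input.len());
    for c in input.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            // Remaining control characters must be written as \u00XX escapes.
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out
}

/// Serializes chunks as a compact JSON array, preserving input order.
fn to_json(chunks: &[ExportChunk]) -> String {
    let items: Vec<String> = chunks
        .iter()
        .map(|c| {
            format!(
                "{{\"id\":{},\"start_line\":{},\"end_line\":{},\"tokens\":{},\"text\":\"{}\"}}",
                c.id,
                c.start_line,
                c.end_line,
                c.tokens,
                escape_json(&c.text)
            )
        })
        .collect();
    format!("[{}]", items.join(","))
}
```

Keeping line ranges next to the text means a retrieval test harness can report which source lines produced a hit, not just which chunk.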

The Broader Direction#

ChunkerLite is aiming to be a standard workbench where teams can:

- Inspect segmentation semantics

- Validate structural correctness (headers, code blocks, lists)

- Standardize chunk quality across projects

- Create reproducible preprocessing baselines

Major cloud and enterprise RAG guides now dedicate entire phases to chunking strategy — boundary-based, structure-aware, and hybrid approaches — precisely because it's so tightly coupled to retrieval quality and cost. This is increasingly important for vector database chunking and embedding pipeline preprocessing in production systems.

Reliable agents require reliable context boundaries.

Try It Now#

ChunkerLite runs entirely in your browser. No signup, no API keys, no data leaves your machine.

Open ChunkerLite →

Paste a document. Adjust chunk settings. See exactly where your splits land.

Conclusion#

RAG systems are only as stable as their segmentation layer.

ChunkerLite makes chunking visible. Visible chunking becomes debuggable. Debuggable chunking becomes trustworthy.

And trustworthy ingestion is the foundation of reliable AI systems.

References#

- Milvus. "What is the optimal chunk size for RAG applications?" https://milvus.io/ai-quick-reference/what-is-the-optimal-chunk-size-for-rag-applications

- Unstructured. "Chunking for RAG: Best Practices." https://unstructured.io/blog/chunking-for-rag-best-practices

- arXiv. "Optimal Chunk Size for RAG." https://arxiv.org/html/2505.21700v2

- Firecrawl. "Best Chunking Strategies for RAG 2025." https://www.firecrawl.dev/blog/best-chunking-strategies-rag-2025

- Reddit r/RAG. "I tested different chunks sizes and retrievers." https://www.reddit.com/r/Rag/comments/1ov0pzk/i_tested_different_chunks_sizes_and_retrievers/

- ByteIota. "Rust WebAssembly Performance: 8-10x Faster." https://byteiota.com/rust-webassembly-performance-8-10x-faster-2025-benchmarks/

- lib.rs. "kiru - High-performance chunker." https://lib.rs/crates/kiru

- Microsoft Learn. "RAG Chunking Phase." https://learn.microsoft.com/en-us/azure/architecture/ai-ml/guide/rag/rag-chunking-phase